Shaping of Systems

01 Mar 2026Background

I have always wondered why the system design as a discipline has lacked the much required structure, even after so many years have observed that both real life system design thinking as well as the system design interview process are completely thought process driven and less structured thinking & framework driven, the main reason seems to be due to the extremely diverse, along with fast, ever changing & evolving mechanisms used to shape the systems under different requirements, constraints, pressures & trade-offs.

Generally one framework would fail to capture the variety of problem & solution space, so the approach followed is more of a library of concepts, patterns & learnings along with the ability to choose wisely, through the analytical thought process which is grounded in experience but driven by problem solving, logical thinking & exploratory learning.

Functional Intent Facing Non-Functional Reality

Large & complex systems do not fail because engineers lack the tools or patterns. They fail because intent and reality are either unknown or misaligned from beginning or over time.

Most design mistakes originate from collapsing what a system exists to do into how it is implemented, or from treating non-functional requirements as secondary constraints rather than primary forces. Over time, successful systems converge toward similar structural shapes – not because of shared technology stacks or common learning, but because they respond to the same underlying real life pressures.

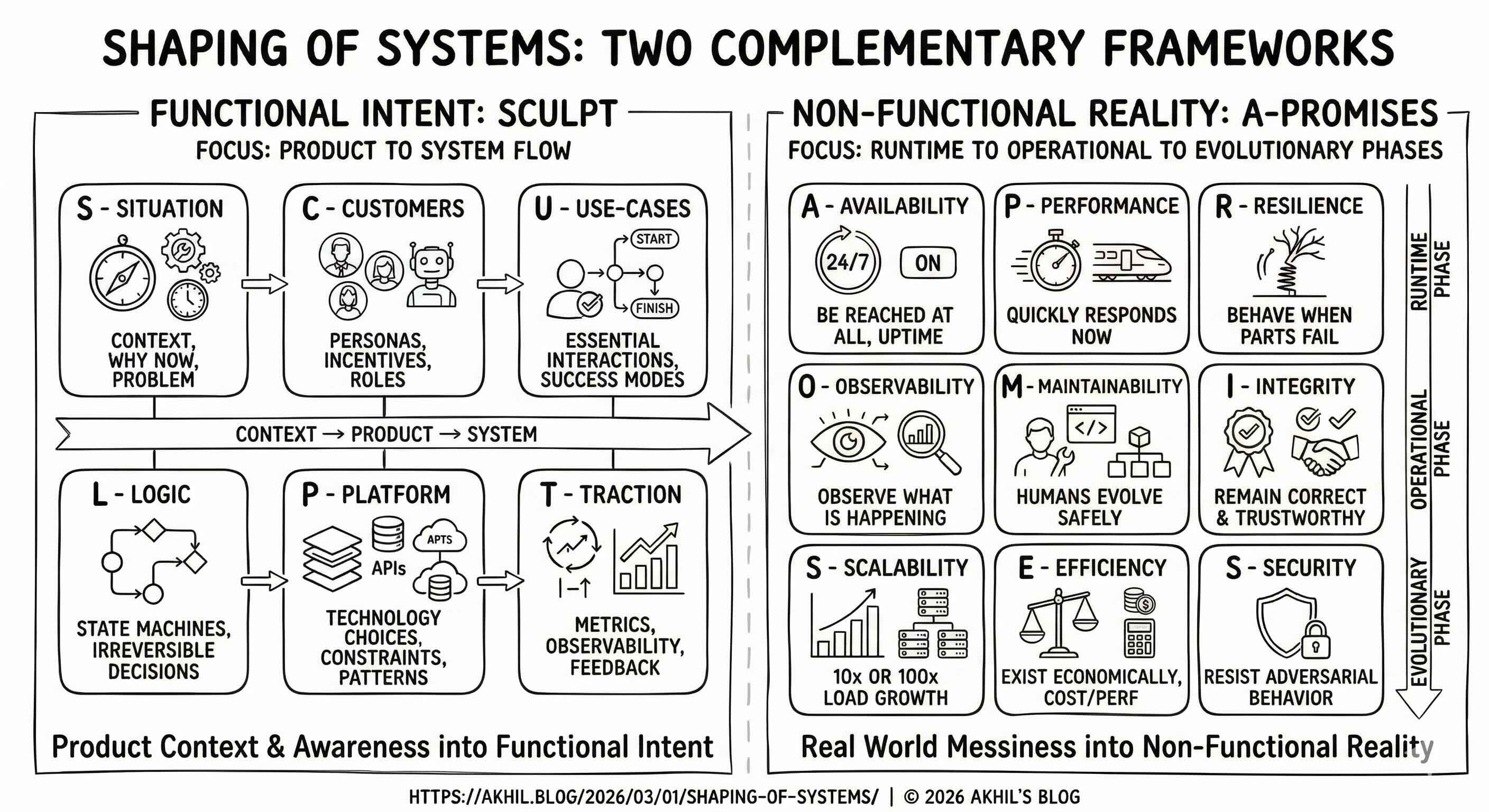

This essay proposes a way to reason about systems by separating functional intent from behavior under reality, then observing how their interaction determines architecture & design choices that shapes the system, and introduces two complementary lenses for reasoning about intent vs reality.

- SCULPT describes functional intent – what the system exists to do.

- A-PROMISES describes non-functional reality – how the system must behave to survive production.

Together, they can replace ad-hoc design intuition based only on experience with slightly more structured reasoning based intuition about tensions and trade-offs along with explicitly calling out assumptions.

Functional Intent: Why the System Exists

Every system is born to resolve a tension. Before databases, queues, caches, or APIs appear, there is a situation that needs to be addressed with the user experience in mind.

Across several domains, six recurring dimensions describe the functional intent. SCULPT is a simple framework to enforce disciplined reasoning – why before what, what before how, basically forces problem framing before design, and naturally transitions from product → system.

- Situation – the contextual pressure that makes the system necessary.

- Customers – the agents whose incentives shape behavior.

- Use-cases – the minimal set of interactions that must succeed.

- Logic – the irreversible decisions and state transitions.

- Platform – the mechanisms chosen to make the logic executable.

- Traction – the feedback loop that validates continued existence.

These dimensions are not a checklist, instead they describe a flow of causality: context produces users; users generate use-cases; use-cases demand logic; logic constrains platform; platform enables outcomes. To the contrary, when this flow is violated like when platform choices precede logic, or metrics precede intent, then systems become brittle.

| Core Question | High Level Areas | Important Questions | Blind Spots & Pitfalls |

|---|---|---|---|

| S – Situation Why does this system need to exist now, and what problem makes it unavoidable? |

Business context and strategic goals. Market or operational constraints. Regulatory or compliance boundaries. Explicit scope and out-of-scope definition. Time horizon and urgency. | What triggered this initiative – growth, failure, regulation, or opportunity? What happens if we build nothing? Who is the economic buyer and what do they measure? What is explicitly out of scope, and why? Is this a greenfield build or a migration from something existing? | Treating the situation as obvious and skipping straight to features. Defining scope so broadly that every solution looks valid. Ignoring regulatory or compliance constraints until they block deployment. Assuming the business context won’t change during the build. Confusing the stated problem with the actual problem – stakeholders often describe symptoms, not causes. |

| C – Customers Whose incentives, constraints, and behaviors will shape how the system must work? |

User personas and segments. Internal versus external consumers. Human versus machine callers. Power users versus casual users. Jobs-to-be-done and motivations | Who are the distinct user types, and how do their goals conflict? Which users generate load versus consume value? Are there machine callers (APIs, internal services) with different SLAs? What does the worst-case user look like (adversarial, confused, high-volume)? How do user segments grow or shift over time? | Designing for “users” as a monolith when segments have conflicting needs. Forgetting internal operators and support teams as first-class users. Ignoring the adversarial user – bots, scrapers, abusers. Optimizing for power users while alienating the majority. Assuming today’s user mix is tomorrow’s user mix. |

| U – Use-cases What are the essential interactions that must succeed for the system to justify its existence? |

Core use-cases that drive primary value. Secondary and edge-case scenarios. Failure scenarios as first-class requirements. Prioritization criteria. Read versus write path decomposition. | Which 2–3 use-cases would make this system a success if nothing else worked? What failure modes must be handled gracefully versus allowed to surface? Which use-cases change design decisions, and which are cosmetic? What does the cold-start experience look like? Are there time-sensitive use-cases (on-sale moments, peak hours)? | Listing features instead of problems. Including use-cases that don’t materially change design decisions. Ignoring failure and degradation as use-cases. Treating all use-cases as equally important instead of ruthlessly prioritizing. Designing for the happy path and discovering edge cases in production. |

| L – Logic What are the irreversible decisions, state transitions, and behavioral rules the system must enforce? |

State machines and lifecycle transitions. Read and write path separation. Synchronous versus asynchronous processing boundaries. Ordering, idempotency, and retry semantics. Workflow orchestration versus choreography. | What state transitions are irreversible, and what are the consequences of getting them wrong? Where does the system need strong consistency versus eventual consistency? Which operations must be synchronous (user-blocking) versus asynchronous (background)? How are concurrent mutations handled – last-write-wins, optimistic locking, CRDTs? What happens when a multi-step workflow fails midway? | Treating logic as “just business rules” when it’s actually distributed systems coordination. Assuming synchronous processing when asynchrony would reduce coupling. Ignoring idempotency until duplicate processing causes data corruption. Conflating workflow orchestration (centralized) with choreography (event-driven) without understanding trade-offs. Designing state machines implicitly instead of explicitly – leading to impossible states in production. |

| P – Platform What technology choices make the logic executable, and what constraints do they introduce? |

Data stores and access patterns. Caching layers and invalidation strategies. Messaging, streaming, and event infrastructure. Deployment topology and service boundaries. Third-party integrations and external dependencies. | What are the dominant access patterns – point lookups, range scans, full-text search, time-series? Does the data model favor relational, document, columnar, or graph storage? Where do caches add value, and what is the invalidation strategy? Are service boundaries aligned with team boundaries and deployment cadence? Which components are on the critical path versus best-effort? | Choosing technologies before understanding logic and access patterns. Introducing caching without a coherent invalidation strategy. Drawing microservice boundaries around technical layers instead of business capabilities. Underestimating the operational cost of every new technology in the stack. Treating third-party APIs as reliable when they are the most common source of production incidents. |

| T – Traction How will you know the system is succeeding, and what feedback loops drive iteration? |

Success metrics tied to business outcomes. Operational health indicators. Adoption and usage signals. Latency, error rate, and throughput SLOs. Feedback loops that inform product and engineering decisions. | What single metric would prove this system is working? Are there leading indicators that predict success before lagging metrics move? What SLOs will trigger engineering action when breached? How will you distinguish system failure from product failure? What instrumentation is needed from day one versus added later? | Defining metrics after launch instead of baking them into the design. Tracking vanity metrics that don’t connect to business outcomes. Missing the feedback loop – metrics that no one monitors or acts on. Conflating system health (latency, errors) with product health (engagement, conversion). Setting SLOs without understanding the cost of meeting them. |

Non-Functional Reality: How the System Survives & Thrives

Functional intent alone does not shape real systems but non-functional reality also intervenes through constraints that are always present but rarely obvious. Non-functional requirements are not “quality attributes” but rather are “underlying forces” to be reckoned with & thought through when building systems which can survive over space & time, and they can be thought of as the promises your system makes to its users/operators & breaking these promises generally has consequences.

Across several domains, there are few recurring dimensions that describe non-functional reality in a comprehensive way. The acronym A-PROMISES forces explicit trade-offs instead of implicit assumptions, with intentional ordering of business survivability first, infra rigor second, focusing on increasing severity gradient - first survive, then operate, then guarantee correctness, then grow sustainably, then defend.

- Availability – whether the system can be reached at all.

- Performance – how quickly the system responds now.

- Resilience – how it behaves when parts fail.

- Observability – what is happening can be observed.

- Maintainability – whether humans can evolve it safely.

- Integrity – whether it remains correct and trustworthy.

- Scalability – what happens when load grows 10x or 100x.

- Efficiency – whether it can exist economically.

- Security – whether it resists adversarial behavior.

This also follows runtime → operational → evolutionary phase / stage wise priorities cleanly, which is generally the common prioritization flow across many system life-cycles.

| Phase | Concerns | Dimensions |

|---|---|---|

| Runtime | Can users reach it? How fast? What when it breaks? | A - Availability, P - Performance, R - Resilience |

| Operational | Can you see what’s happening? Can you change it safely? Is it correct & trustworthy? | O - Observability, M - Maintainability, I - Integrity |

| Evolutionary | Does it scale? Can it exist economically? Does it resist attack and abuse? | S - Scalability, E - Efficiency, S - Security |

Generally most of the architectural decisions are a compromise among these dimensions – when a system favors availability, it weakens integrity, when it optimizes performance, it often sacrifices maintainability, similarly observability is runtime architectural necessity, cannot be an operational afterthought. These trade-offs are unavoidable; denying them only hides them until failure hits.

| Core Question | High Level Areas | Important Questions | Blind Spots & Pitfalls |

|---|---|---|---|

| A – Availability Can users reach the system when they need it? |

SLOs, SLAs, and uptime targets. Fault tolerance and redundancy. Graceful degradation strategies. Fail-open versus fail-closed policies. Dependency availability chains. | What availability target is required (99.9%, 99.99%), and what does the gap cost? Is partial availability acceptable, or is it all-or-nothing? Should the system fail-open (allow uncertain requests) or fail-closed (block when uncertain)? Which dependencies are on the critical path, and what is the compound availability? What is the planned maintenance strategy – rolling updates, blue-green, canary? | Quoting an SLO without understanding the error budget math. Assuming availability of dependencies without measuring compound probability. Treating all features as equally critical – not all paths need the same uptime. Ignoring regional or network-level failures in availability planning. Designing for steady-state availability but not for recovery speed after failures. |

| P – Performance How fast does the system respond under current load, and how predictable is that response? |

Latency targets across percentiles (P50, P95, P99). Throughput capacity. Tail latency behavior and amplification. Synchronous versus asynchronous path performance. User-perceived versus system-measured performance. | What latency is acceptable at P50 versus P99, and who defines those targets? Where does tail latency amplification occur (fan-out, dependent calls, GC pauses)? Is the bottleneck compute, I/O, network, or coordination? What is the difference between system latency and user-perceived latency? Are there batch versus real-time paths with different performance profiles? | Optimizing average latency while ignoring P99 tail behavior. Measuring server-side latency but missing client-perceived delays (DNS, TLS, rendering). Assuming linear performance scaling when real systems have cliffs. Tuning for throughput at the expense of latency variance. Confusing “it’s fast on my machine” with “it’s fast under production load.” |

| R – Resilience What happens when things break – does the system degrade gracefully or cascade into failure? |

Failure isolation & blast radius containment. Retry strategies, backoff, & circuit breakers. Dependency failure handling. Disaster recovery & business continuity. Graceful degradation modes. | What is the blast radius of each component’s failure? How does the system behave when a dependency is slow versus down? Are retries safe, or do they amplify failure (retry storms)? What is the disaster recovery strategy – active-active, active-passive, backup-restore? How long can the system operate in a degraded mode before it becomes unacceptable? | Confusing resilience with availability – “it’s up” is different from “it handles failure well.” Implementing retries without backoff or jitter, causing cascading overload. Missing the difference between a dependency being down (fast failure) versus slow (resource exhaustion). Testing happy-path resilience but never practicing disaster recovery. Assuming the network is reliable, latency is zero, and bandwidth is infinite. |

| O – Observability Can you understand what the system is doing, why it’s misbehaving, and where to intervene? |

Structured logging with correlation. Distributed tracing across service boundaries. Metrics pipelines and dashboards. Alerting strategies and escalation. Debuggability and root-cause analysis tooling. | Can you trace a single request across every service it touches? What is the cardinality cost of your metrics – are you tracking enough dimensions without exploding storage? How quickly can an on-call engineer diagnose a novel failure? Are alerts actionable, or do they create noise that gets ignored? What is the observability cost as a percentage of infrastructure spend? | Treating observability as “we have logging” when logging without structure is just noise. Adding distributed tracing after the architecture is set, making instrumentation painful and incomplete. Alert fatigue from thresholds that fire on non-actionable conditions. Underestimating observability infrastructure cost – it often becomes 10–30% of total spend. Building dashboards that show system health but not business health. Observability that works in steady state but fails during the incidents when you need it most. |

| M – Maintainability Can humans safely evolve, deploy, and operate this system over its lifetime? |

Deployment safety – blue-green, canary, rollback. Schema evolution and backward compatibility. Feature flagging and progressive rollout. Operational runbooks and incident playbooks. Team cognitive load and onboarding cost. | How long does it take a new engineer to make a meaningful contribution? Can you deploy to production without fear – is rollback fast and safe? How are database schema changes handled without downtime? Is the system’s complexity proportional to the problem it solves? What is the bus factor – how many people truly understand the system? | Optimizing for build speed while ignoring the maintenance tax that follows. Assuming the team that built it will maintain it forever. Designing schemas that are efficient today but impossible to migrate tomorrow. Accumulating operational debt – no runbooks, no playbooks, tribal knowledge only. Measuring engineering productivity by feature velocity without accounting for incident burden. |

| I – Integrity Is the data correct, consistent, and trustworthy at all times? |

Consistency model selection (strong, eventual, causal). Idempotency guarantees. Write ordering and conflict resolution. Data durability and corruption prevention. Audit trails and data lineage. | What consistency model does each use-case actually require – are you paying for strong consistency where eventual would suffice? Which operations must be idempotent, and how is idempotency enforced (keys, deduplication, deterministic logic)? How are conflicting concurrent writes resolved – last-write-wins, merge, reject? What is the data durability guarantee, and how is it validated (not just assumed)? Can you prove the system state is correct after a failure – is there an audit trail? | Defaulting to strong consistency everywhere when most reads tolerate staleness. Assuming idempotency without explicitly designing for it – duplicate processing is the most common data corruption source. Conflating durability (data is saved) with integrity (data is correct). Ignoring ordering constraints until race conditions corrupt production data. Treating consistency as a database property when it’s actually a system-wide design decision spanning caches, queues, and services. |

| S – Scalability What happens when load grows 10x or 100x – does the architecture bend or break? |

Horizontal versus vertical scaling strategy. Sharding and partitioning approaches. Stateless versus stateful service design. Back-pressure and load shedding mechanisms. Growth modeling and capacity planning. | What is the expected growth trajectory – linear, exponential, or spiky? Which components are stateful, and how does state limit horizontal scaling? What is the sharding or partitioning strategy, and what happens when you need to re-shard? Where are the scaling bottlenecks – databases, coordination points, shared resources? How does the system shed load gracefully when capacity is exceeded? | Confusing performance with scalability – a system can be fast today and break at 10x. Designing stateful services without a plan for state redistribution. Choosing a sharding key that creates hot spots under real-world access patterns. Scaling compute without scaling the data layer, creating new bottlenecks. Assuming cloud auto-scaling solves the problem when the real constraint is architectural (shared locks, single-writer, coordination). |

| E – Efficiency Can the system exist economically at the scale it needs to operate? |

Cost per request, per user, and per transaction. Resource utilization and waste. Infrastructure cost scaling curves. Build versus buy trade-offs. Unit economics and margin impact. | What does it cost to serve one request, one user, or one transaction – and how does that scale? Where is the system wasting resources – over-provisioned instances, unused storage, redundant processing? Is the cost curve linear, sub-linear, or super-linear with growth? What is the total cost of ownership including operations, on-call, and incident response? Are there architectural choices that trade higher upfront cost for lower marginal cost? | Ignoring cost until the monthly bill arrives and triggers an emergency optimization sprint. Optimizing compute cost while ignoring data transfer, storage, and third-party API costs. Treating efficiency as purely an infrastructure concern when architectural choices (fan-out, replication factor, retention) dominate spend. Over-engineering for efficiency at low scale when engineering time is more expensive than infrastructure. Failing to model cost scaling curves – many systems are cheap at launch and unsustainable at target scale. |

| S – Security How does the system resist abuse, compromise, and unauthorized access? |

Authentication and authorization models. Data privacy and encryption (at rest, in transit). Abuse prevention and rate limiting. Tenant isolation in multi-tenant systems. Threat modeling and attack surface management. | What is the authentication model, and how are tokens/sessions managed and revoked? How is authorization enforced – at the gateway, service level, or data level? What data is sensitive (PII, financial, health), and how is it classified and protected? How is the system protected against abuse – bots, scraping, credential stuffing, DDoS? In a multi-tenant system, how is tenant data isolated, and what happens if isolation breaks? | Treating security as a bolt-on audit instead of a structural design property. Implementing authentication without thinking about authorization granularity. Encrypting data in transit but leaving it unencrypted at rest (or vice versa). Designing abuse prevention reactively instead of building it into the admission path. Assuming tenant isolation is guaranteed by application logic when infrastructure-level leaks are possible. Ignoring supply-chain security – dependencies, CI/CD pipelines, and secrets management. |

How Functional Intent Interact Non-Functional Reality ?

System architecture & design emerges at the intersection of what must be done (intent) & what cannot be avoided (reality). SCULPT ensures building the right system while A-PROMISES ensures that system survives reality. SCULPT shows problem shaping and product awareness while A-PROMISES shows real world constraints creating pressures & challenges.

Few Structural Principles That Emerge

- Irreversible actions demand early, explicit gating. Wherever a decision cannot be undone – admitting a request, selling a seat, confirming a write, the system must decide before work begins, not after. Late enforcement means the damage is already done. This is why rate limiters sit at the ingress, ticketing systems lock inventory before payment, and KV stores require quorum acknowledgment before confirming writes. The earlier the gate, the smaller the blast radius. The corollary: every gate must declare its assumptions explicitly, because implicit assumptions in irreversible paths become production incidents.

- Latency shapes trust more than correctness. Users forgive stale data faster than they forgive slow responses. A home listing platform showing slightly outdated prices in 200ms builds more confidence than one showing perfect prices in 2 seconds. A KV store with predictable P99 latency gets adopted; one with better average latency but occasional 5-second spikes gets replaced. This is counterintuitive for engineers who optimize for correctness first, but perception is the product. Latency is not a technical metric – it is a user-facing promise.

- Asynchrony is earned by tolerating delay; synchrony is reserved for irreversibility. Systems do not “choose” async for performance. Asynchrony appears precisely where correctness can tolerate temporal delay – ranking pipeline updates, index refreshes, replication propagation. Synchrony persists wherever the cost of a stale or wrong decision is unrecoverable – token accounting in a rate limiter, seat reservation in ticketing, leader election in a KV store. The boundary between sync and async is not a performance optimization; it is a correctness boundary.

- State machines emerge wherever reversibility disappears. When a system cannot undo a transition – available → reserved → sold, or proposed → committed → replicated – implicit state tracking silently introduces impossible states. Explicit state machines make transitions auditable, retries safe, and failures recoverable. Ticketing systems need them because overselling is catastrophic. KV stores need them because replication state determines data safety. The absence of an explicit state machine is not simplicity; it is hidden complexity waiting to surface.

- Caches exist wherever speed outweighs freshness but invalidation determines whether they help or hurt. Every cache is a bet that stale data is acceptable for some window. Home listing availability caches bet that a few seconds of staleness is worth sub-100ms lookups. Rate limiter local caches bet that approximate counts are worth avoiding network round-trips. The cache itself is easy. The invalidation strategy – when staleness becomes unacceptable is where systems break. A cache without an explicit invalidation contract is a consistency bug with a delay timer.

- Partial availability beats total failure in almost every system but the boundary must be designed, not discovered. A home listing platform returning cached results during a ranking outage is degraded but useful. A rate limiter in fail-open mode during a Redis outage is imperfect but survivable. The principle is universal, but the boundary – which functions degrade, how far, and what the user sees – must be an explicit design decision. Systems that discover their degradation modes in production discover them badly. The question is never “should we degrade gracefully?” – it is “what does graceful look like, and have we tested it?”

- Observability is a prerequisite for every other property, not a feature added after them. You cannot improve availability that you cannot measure. You cannot debug resilience failures you cannot trace. You cannot optimize efficiency you cannot attribute. Across all four systems, observability determines whether other properties are real or aspirational. A KV store without partition-level metrics cannot rebalance safely. A ticketing system without end-to-end purchase tracing cannot diagnose allocation failures. Observability is not instrumentation – it is the ability to ask novel questions about system behavior and get answers fast enough to act.

- Systems fail socially before they fail technically. The rate limiter that no one knows how to reconfigure safely. The ranking pipeline that only one engineer understands. The KV store cluster that no one has practiced recovering from a partition. Technical failures have technical fixes. Organizational failures – knowledge silos, missing runbooks, untested recovery procedures, schemas that can’t evolve – compound silently until they become the actual cause of outages. Maintainability is not a quality attribute; it is the system’s immune system.

These principles are not just patterns to help pick an existing solution to be reused to speed up design; but they are outcomes of pressure seen in many system problems to be re-applied.

Designing Systems, Not Deriving Solutions

The mistake in many system designs is treating design & architecture as composition rather than response. Systems are not assembled from design pieces picked from the past; they are shaped.

- Functional intent defines why a system must exist. Non-functional reality defines how it is allowed to exist. Architecture generally is the compromise between the two.

- Frameworks and diagrams are useful only insofar as they help surface these tensions. When they obscure them, they become harmful.

- A well-designed real life system does not appear simple & elegant, instead it appears inevitable because every part exists for a reason that reality enforces.

- Good systems are not optimized on every dimension, they mirror reality–about constraints, about failure, and about trade-offs.

- System design, at its core, is not about inventing structures, it is about recognizing which structures reality will eventually force – and getting there deliberately with thinking, rather than accidentally with iterations of trial & error.

Four real life examples

Consider four systems that appear unrelated mostly: a rate limiter, a home listing platform, an event ticketing system, and a key-value store, even though their surface areas differ, but their shapes are governed by the same underlying pressures, constraints & laws. Before applying the frameworks, it’s important to know what makes each problem fundamentally different.

| Dimension | Rate Limiter – Admission Control as a First-Class System | Home Listing Platform – Discovery Under Uncertainty | Event Ticketing – Atomic Allocation Under Contention | Key-Value Store – Predictable Semantics at Scale |

|---|---|---|---|---|

| Problem | Shared infrastructure must survive uncoordinated demand, and protection must occur before damage propagates. | Discovery must connect exploratory intent with volatile supply, while preserving marketplace balance. | Scarce inventory under synchronized demand requires deterministic allocation, not best-effort throughput. | Latency-sensitive access with simple semantics becomes complex only because of scale and failure. |

| Why Is It Hard ? | Damage propagates faster than detection. Shared infrastructure cannot distinguish malicious intent from accidental overload in real time. The only safe option is to control admission before work is done. | Discovery systems must tolerate ambiguity – users do not know what they want, supply changes constantly, and relevance is probabilistic. This forces a fundamental separation: retrieval is not ranking, and ranking is not presentation. | Demand is synchronized, inventory is finite, and failure is irreversible. Overselling is not a degraded state – it is a fatal one. This forces the system into explicit state machines where time (TTLs) becomes a first-class concept. | The interface is trivial; the reality is not. Distribution introduces unavoidable conflicts: consistency versus availability, durability versus latency, coordination versus throughput. These tensions produce canonical structures that no shortcut can avoid. |

| Defining Architectural Property | Synchronous gating at the ingress. Because admission decisions are irreversible, latency constraints are extreme. Because fairness requires memory, shared state is unavoidable. Because blocking the wrong request may be worse than allowing a bad one, availability and correctness remain in permanent tension. | Multi-stage pipelines: constraint filtering, candidate generation, re-ranking, and assembly. Asynchrony appears because freshness cannot block responsiveness. Caching appears because perceived latency defines trust. Availability favors partial correctness – showing something reasonable now beats everything perfectly later. | Conservative architecture by necessity. Performance is subordinate to predictability. Resilience focuses on replay and compensation. Integrity dominates all other concerns. The architecture privileges correctness over throughput and fairness over speed. | A machine for containing complexity, not eliminating it. Routing minimizes coordination. Replication manages failure. Compaction manages cost. The architecture exists because no single-node solution survives the combination of scale, failure, and latency requirements. |

| Key Insight | The architecture is minimal but rigid: early placement, fast state access, deterministic decisions. It does not evolve toward flexibility; it evolves toward predictability. | Integrity becomes perceptual rather than absolute. The system’s correctness is measured by user satisfaction, not by data precision alone. Partial correctness is a feature, not a bug. | Complexity is accepted because simplification would destroy trust. Every shortcut in this domain has a name: it’s called “overselling.” | Maintainability becomes existential. The system will outlive its creators. Tooling and observability are not auxiliary features; they are survival mechanisms. |

Applying the framework to the four examples

The following tables apply SCULPT and A-PROMISES to each example. Each cell captures the design reasoning - not so much implementation details, but more of tensions & decisions that shape architecture.

SCULPT – Functional Intent

| SCULPT | Rate Limiter | Home Listing Platform | Event Ticketing | Key-Value Store |

|---|---|---|---|---|

| S – Situation | Shared infrastructure must survive uncoordinated demand. Abuse and accidental overload are indistinguishable at runtime. Protection must occur before downstream damage propagates – reactive detection is too late. | Discovery must connect demand with sparse, volatile supply. User intent is exploratory and imprecise, not transactional. Marketplace health depends on balanced exposure – favoring any side destroys liquidity. | Demand is highly synchronized and often adversarial (bots, scalpers). Overselling is catastrophic and publicly irreversible. Perceived fairness matters as much as allocation correctness – unfairness damages brand trust. | Many systems depend on predictable, low-latency data access. The store acts as a foundational infrastructure primitive – its failures cascade everywhere. Horizontal scaling with predictable behavior is non-negotiable. |

| C – Customers | Callers vary widely in intent, quality, and trust level. Internal consumers assume stability and fast failure; external consumers assume nothing. Security and operations teams require real-time visibility and policy control. | Seekers optimize for relevance and speed of discovery. Suppliers (owners, agents) optimize for visibility and yield. Internal ranking and pricing systems optimize for marketplace leverage and long-term health. | Buyers optimize for success probability – they want certainty, not options. Organizers optimize revenue, fairness, and brand reputation. The platform itself optimizes throughput and trust simultaneously. | Callers expect uniform, well-documented semantics regardless of scale. Platform teams expect operability, debuggability, and safe upgrades. Workloads range from low-latency point lookups to high-throughput batch scans. |

| U – Use-cases | Enforce fairness without centralized coordination. Absorb traffic bursts without penalizing steady-state callers. Degrade gracefully under overload – shedding load is better than crashing. | Narrow large candidate sets efficiently based on multi-dimensional intent. Support iterative refinement as users clarify what they want. Surface supply without overwhelming users – relevance over completeness. | Allocate scarce inventory atomically under extreme contention. Handle demand spikes deterministically – no probabilistic allocation. Support the full post-purchase lifecycle: cancellation, transfer, refund. | Support fast point lookups as the primary access pattern. Tolerate concurrent reads and writes without coordination overhead. Scale access horizontally without adding semantic complexity. |

| L – Logic | Convert continuous demand into discrete token allowances. Make admission decisions synchronously under uncertainty – there is no time for deliberation. Maintain correctness under high concurrency without distributed locking. | Transform raw listings into ranked, personalized candidates. Combine geo-spatial, preference, and policy constraints in a composable pipeline. Maintain stable ordering across user interactions to preserve trust. | Temporarily reserve inventory under contention using TTL-based locks. Transition inventory through well-defined, auditable states (available → held → sold). Guarantee irreversible finalization – once sold, the seat cannot be double-allocated. | Map keys to partitions deterministically using consistent hashing. Coordinate replicas under failure while preserving the chosen consistency model. Resolve conflicts predictably – last-write-wins, vector clocks, or application-level merge. |

| P – Platform | Fast shared state store with atomic counters (Redis, in-memory). Placed on the critical ingress path – gateway, sidecar, or middleware. Regional versus global enforcement based on consistency requirements. | Hybrid of index-based retrieval (Elasticsearch, OpenSearch) and caching layers. Asynchronous enrichment and ranking pipelines. Strong separation of read path (search, browse) and write path (listing updates). | Strongly consistent transactional core for inventory management. Fast cache layer for availability lookups to reduce database pressure. External payment and notification services on the critical purchase path. | Partitioned storage engine with log-structured writes (LSM trees). Replication protocol defining the consistency-availability trade-off. Cluster membership and coordination service for topology management. |

| T – Traction | Reduction in cascading downstream failures. Stable and predictable latency under variable load. Measurable improvement in caller experience – fewer timeouts, fewer retries. | Improved discovery efficiency – higher engagement per search session. Healthier supply-demand matching measured by contact and conversion rates. Time-to-first-meaningful-result as a leading indicator. | Successful allocation rate under peak synchronized demand. Lower drop-off during checkout – a proxy for system reliability and UX clarity. Trust in the platform’s fairness, measured by repeat usage and complaint rates. | Predictable latency envelopes maintained across percentiles. High availability under node churn, traffic spikes, and cluster operations. Widespread adoption across internal services – the ultimate vote of confidence. |

A-PROMISES – Non-Functional Reality

| A-PROMISES | Rate Limiter | Home Listing Platform | Event Ticketing | Key-Value Store |

|---|---|---|---|---|

| A – Availability | Protection must not become a single point of failure. The fail-open versus fail-closed decision is fundamental – incorrect blocking may be worse than temporary overload. Partial protection (some rules enforced) is better than total protection failure. | Partial search results preserve user momentum better than empty pages. Cached fallback paths ensure read availability even during index lag. Read-path availability is prioritized over write-path freshness. | Purchase path availability strictly dominates browsing availability. Queue-based admission control prevents uncontrolled concurrency from degrading the critical path. Failure semantics must be explicit and final – ambiguous failures are worse than clear rejections. | Availability competes directly with consistency – the CAP theorem is not theoretical here. Callers must understand availability guarantees without reading documentation. Maintenance operations (upgrades, rebalancing) must not disrupt active traffic. |

| P – Performance | Admission decisions must complete faster than the downstream calls they protect – if the limiter adds significant latency, it defeats its purpose. Tail latency directly impacts caller success and retry behavior. Hot-key concentration is unavoidable and must be engineered for. | Perceived latency shapes user trust more than actual system latency. Fast feedback loops (instant filter updates, smooth pagination) matter more than completeness. Sub-300ms search latency is the threshold below which users feel the system is responsive. | Latency spikes during on-sale moments translate directly to lost sales and customer frustration. Predictability matters more than raw speed – consistent 200ms is better than variable 50–500ms. Tail latency at P99 defines the user experience for the most engaged buyers. | P99 read and write latency defines the store’s usability as infrastructure. Throughput must scale linearly with added capacity – sub-linear scaling is an architecture problem. Latency variance is more damaging than latency mean – callers set timeouts based on worst-case expectations. |

| R – Resilience | Dependency failures (shared state store outages) are common, not exceptional – the system must have a degraded-mode plan. Recovery must be automatic and fast – manual intervention during an outage compounds the problem. Bypass rules for known-safe traffic can prevent false positives during limiter degradation. | Ranking service failures must not block basic search – retrieval and ranking degrade independently. Search index lag is inevitable; the read path must tolerate stale data without corrupting user experience. Backpressure from listing update storms must not cascade into search latency. | External payment gateways will fail mid-transaction – the purchase flow must be replay-safe and compensating. Demand spikes must be absorbed through controlled admission, not allowed to amplify into cascading failures. State transitions must be idempotent so that retries after partial failures don’t corrupt inventory. | Individual nodes fail routinely at scale – this is normal operations, not an incident. Re-replication speed after node loss determines the window of vulnerability. Network partitions must resolve toward a predictable, documented state – split-brain is existential. |

| O – Observability | Operators must see in real time which policies are firing, which tenants are being throttled, and whether the limiter itself is healthy. Debugging must be deterministic – given the same state and request, the decision must be reproducible. Metrics must distinguish between legitimate throttling (working correctly) and false positives (harming good traffic). | Search pipeline observability must span retrieval, ranking, and assembly – partial visibility produces misleading root causes. Ranking experiments require isolated metrics to detect regressions without cross-contamination. Listing freshness and index lag must be visible as leading indicators, not discovered during user complaints. | End-to-end purchase tracing from admission through payment to confirmation is non-negotiable. Seat allocation decisions must be auditable after the fact – “why did this person not get a ticket?” must be answerable. Real-time dashboards during on-sale events must show queue depth, allocation rate, and failure reasons. | Request-level tracing must cross client, routing, replica, and storage engine boundaries. Cluster-level metrics (replication lag, partition balance, compaction backlog) define operational health. Capacity forecasting depends on observability data – without it, scaling is reactive and expensive. |

| M – Maintainability | Rate limiting policies change faster than code deploys – dynamic configuration without restarts is essential. Per-tenant and per-endpoint policies must be composable without combinatorial explosion. Operational tooling must support safe policy rollout with rollback. | Ranking model iteration speed directly determines competitive advantage – slow experimentation means stale relevance. Feature flagging must be granular enough to test ranking changes on user segments without global risk. Debuggable search pipelines require clear stage boundaries and intermediate result inspection. | Business rules (pricing tiers, fee structures, allocation policies) vary per event and change frequently. Schema migrations for inventory and transaction tables must be zero-downtime. Operational dashboards must scale with the number of concurrent events – per-event isolation without per-event engineering effort. | Cluster rebalancing must be automated, non-disruptive, and observable. Schema evolution (new data formats, compression changes) must be backward compatible across cluster versions. Humans must be able to understand cluster state without reading code – operational tooling is a survival requirement. |

| I – Integrity | Token and counter accounting must be accurate under concurrency – miscounts silently erode protection or fairness. Race conditions in allowance checking are more dangerous than outages because they fail silently. Window boundary semantics (fixed versus sliding) must be provably correct – subtle bugs here are nearly undetectable. | No duplicate listings should appear in results – deduplication failures confuse users and erode trust. Pricing and availability must be consistent between search results and detail pages – stale data creates broken promises. Ranking inputs (signals, features) must be consistent across pipeline stages to produce stable, explainable ordering. | No overselling under any condition – this is the single inviolable invariant. Ticket allocation must be exactly-once, provable, and auditable after the fact. Seat map representation must be authoritative – discrepancies between what users see and what the system knows are catastrophic. | The chosen consistency model (strong, eventual, causal) defines the system’s contract with callers – violating it silently is worse than downtime. Write ordering correctness must be maintained through failures, not just during steady state. Data corruption prevention (checksums, write validation) is foundational – undetected corruption propagates irreversibly. |

| S – Scalability | Hot-key concentration creates non-uniform load that defeats naive horizontal scaling. Cross-region enforcement requires either state replication (costly, consistent) or regional independence (cheaper, approximate). Global rate limiting at millions of QPS requires sharding the counter space without losing fairness. | Index growth competes with query latency – larger indexes mean slower searches unless partitioned carefully. Re-ranking cost grows with candidate set size – relevance versus latency is a scaling trade-off. User growth amplifies both read load (searches) and write load (interactions, signals), but asymmetrically. | On-sale traffic spikes are extreme and brief – auto-scaling is too slow; capacity must be pre-provisioned or absorbed through queuing. Seat-level contention increases super-linearly with concurrent buyers on popular events. Multi-event concurrency requires isolation – one popular event’s spike must not degrade the platform for others. | Partitioning strategy determines the system’s scaling ceiling – re-sharding after the fact is a major operational event. Adding nodes must increase capacity proportionally without redistribution storms. Write amplification from replication and compaction grows with scale – efficiency and scalability are directly coupled. |

| E – Efficiency | Memory versus precision is the fundamental trade-off – exact counting costs more than approximate (e.g., probabilistic structures). Hot-key mitigation (replication, local caching) has direct infrastructure cost. The cost of protection must remain a small fraction of the cost of the services being protected. | Index storage versus query speed trade-off defines infrastructure spend. Compute-heavy re-ranking versus aggressive caching represents the core efficiency tension. The cost of serving one search – indexing, retrieval, ranking, assembly – defines unit economics. | Inventory lock contention drives infrastructure cost during spikes – pessimistic locking is expensive but safe. Queueing infrastructure trades latency for stability, adding cost even when not at peak. Payment processing fees are fixed per transaction – optimizing conversion rates directly improves unit economics. | Storage amplification from LSM compaction is the hidden dominant cost. Write amplification from replication multiplies every write by the replication factor. Cost per request and cost per stored GB must both remain bounded as the cluster grows. |

| S – Security | Attack traffic mimics legitimate traffic – signature-based detection is insufficient. Identity spoofing (forged API keys, rotated IPs) defeats naive per-caller limits. Tenant isolation in multi-tenant limiters must prevent one caller from consuming another’s quota. | Scraping and data harvesting affect marketplace health beyond just data theft. Privacy controls determine what listing information is visible to whom and under what conditions. Fraudulent listings (fake properties, bait-and-switch pricing) require detection without blocking legitimate supply. | Bot protection and anti-scalping controls are table-stakes – without them, fairness is fictional. Payment security (PCI compliance, tokenization) raises the stakes of any breach. PII protection for buyer data must survive the full lifecycle: collection, storage, processing, and deletion. | Shared multi-tenant infrastructure magnifies the blast radius of any security breach. Access control boundaries between tenants must be enforced at the storage level, not just the application level. Encryption at rest and in transit is foundational – it cannot be deferred to “later.” |

SCULPT ensures you build the right system by forcing problem framing before solutioning. A-PROMISES ensures the system survives reality by making trade-offs explicit rather than implicit. Together, they form a thinking framework or easy to remember mental model which can help with designing complex systems.